I’m just saying, nobody in Australia would shed a tear if she stopped existing.

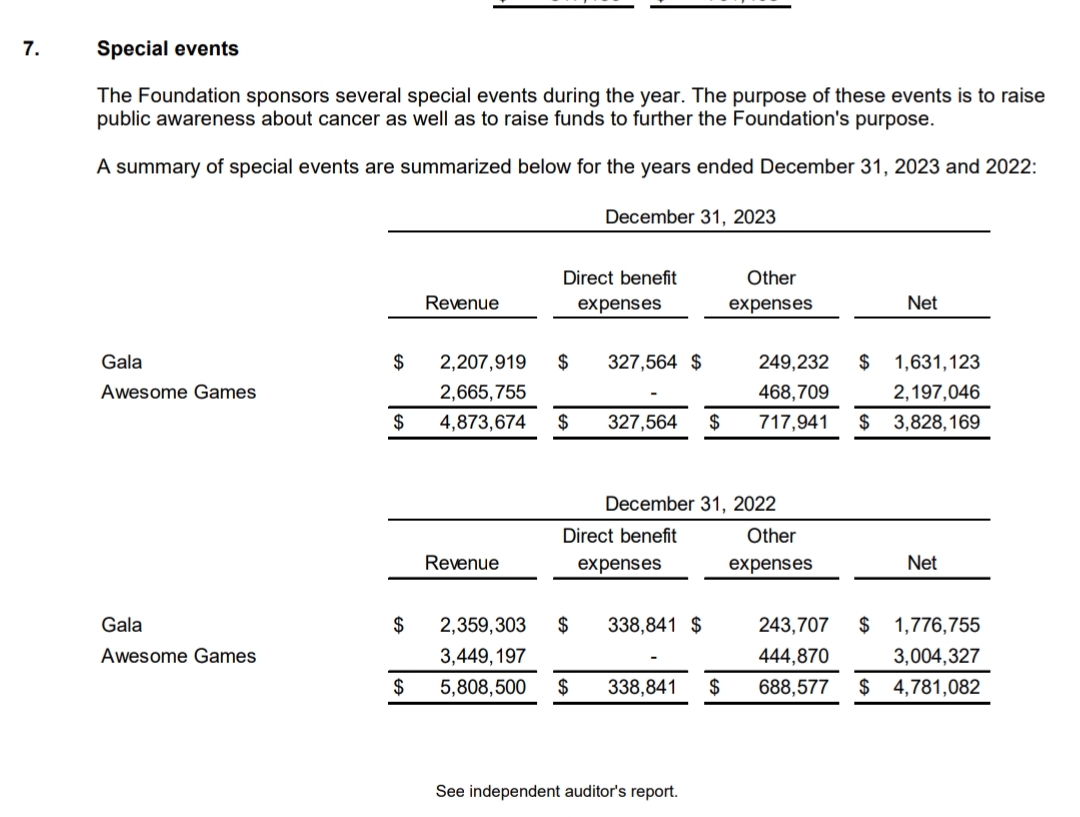

The charity is real, and it took me about 2 minutes to find their audited financial statements for 2022-23 here: https://preventcancer.org/about-us/financials/financial-statements-and-990-forms/

If you go to page 16, you can see the event notes for AGDQ for those two years.

I spent another couple of minutes on the ProPublica Nonprofit Explorer lookup: https://projects.propublica.org/nonprofits/organizations/521429544

I’m far from an expert, sorry, but my experience has been so far so good (literally configured with the wizard in Proxmox, set and forget), even through a single disk loss. Performance for VM disks was great.

I can’t see why regular files would be any different.

I have 3 disks, one on each host, with Ceph keeping 2 copies of the data (tolerant to 1 disk loss) distributed across them. That’s pretty much what I think you’re after.
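
If it helps to put numbers on that layout, here is a rough back-of-envelope sketch in Python. It assumes one equally sized disk (OSD) per host and a CRUSH failure domain of "host", so each copy lands on a different host; the 1 TB disk size is purely hypothetical. The `size` and `min_size` names mirror Ceph's pool settings (copies kept, and copies needed to keep serving I/O). For reference, Ceph's defaults are 3/2, and 2/1 like my setup trades safety margin for capacity.

```python
def replicated_pool_summary(disk_tb: float, hosts: int, size: int, min_size: int) -> dict:
    """Back-of-envelope numbers for a Ceph replicated pool.

    Assumes one equally sized disk (OSD) per host and a failure domain of
    'host', so every copy of an object sits on a different host.
    """
    if not 1 <= min_size <= size <= hosts:
        raise ValueError("need 1 <= min_size <= size <= hosts")
    raw = disk_tb * hosts
    return {
        "raw_tb": raw,
        # Every object is stored `size` times, so usable space is roughly
        # raw / size (before Ceph's nearfull/full safety margins kick in).
        "usable_tb": raw / size,
        # Data survives as long as one copy remains after simultaneous failures.
        "host_losses_without_data_loss": size - 1,
        # I/O keeps flowing while at least `min_size` copies are still up.
        "host_losses_with_io_continuing": size - min_size,
    }


# 3 hosts x 1 TB (hypothetical), 2 copies, keep serving with 1 copy left.
print(replicated_pool_summary(1.0, hosts=3, size=2, min_size=1))
```

With those inputs you get 3 TB raw, 1.5 TB usable, and one host can drop out without losing data or stalling I/O.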

I’m not sure about seeing the file system while all the hosts are offline, but if you’ve got any one system with a valid copy online, you should be able to see it. I do. But my emphasis is generally on getting the host back online.

I’m not 100% sure what you’re trying to do, but a mix of Ceph as remote storage plus something like Syncthing on an endpoint to send stuff to it might work? Syncthing might even work on its own, without Ceph.

I also run ZFS on an 8-disk NAS that’s my primary storage, with shares for my Docker containers to send stuff to and my media server to pull it from. That’s just TrueNAS SCALE. That way it handles data similarly. ZFS is also very good, but until SCALE came out, it wasn’t really possible to do the “add a compute node to expand your storage pool” thing, which is how I want my VM hosts. ZFS scale-out looks way harder than Ceph.

Not sure if any of that is helpful for your case, but I recommend trying something if you’ve got spare hardware: see how it goes on dummy data, then blow it away and try something else. See how it acts when you take a machine offline. When you know what you want, do a final blow-away and implement it the way you learned works best.

3x Intel NUC, 6th-gen i5 (2 cores), 32 GB RAM. Proxmox cluster with Ceph.

I just ignored the limitation and once tried a single 32 GB SODIMM (out of a laptop), and it worked fine, but I went back to 2x16 GB DIMMs since the real limit was still the 2-core CPU. Lol.

I’ve been running that cluster for 7 or so years now, since I bought them new.

I suggest just running off shit-tier hardware, since three nodes give you redundancy and enough performance. I’ve run entire proofs of concept for clients off them: dual domain controllers and FC RD Gateway, broker, session hosts, FSLogix, etc., back when MS had only just bought that tech. Meanwhile, my home “ARR” stack just plugs along in Docker containers. Even my OPNsense router is virtual, running on them. Just get a proper managed switch and bring the internet in on a VLAN to the guest VM on a separate virtual NIC.
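
For the virtual router part, a minimal sketch of what the Proxmox side could look like, written as a small Python helper that only prints `qm set` commands. Everything specific here is hypothetical: VM ID 100, bridge `vmbr0`, WAN on VLAN 10 and LAN on VLAN 20. It also assumes `vmbr0` is marked VLAN-aware and that the managed switch tags the ISP uplink port with the WAN VLAN.

```python
import shlex
from typing import List, Optional


def router_vm_nic_commands(vmid: int, bridge: str, wan_vlan: int,
                           lan_vlan: Optional[int] = None) -> List[str]:
    """Build Proxmox `qm set` commands giving a router VM two virtual NICs.

    net0 is the WAN side: only frames tagged `wan_vlan` on the (assumed
    VLAN-aware) bridge reach it. net1 is the LAN side, optionally on its
    own VLAN. The commands are returned as strings, not executed.
    """
    for vlan in (wan_vlan, lan_vlan):
        if vlan is not None and not 1 <= vlan <= 4094:
            raise ValueError("VLAN IDs must be between 1 and 4094")
    wan = f"virtio,bridge={bridge},tag={wan_vlan}"
    lan = f"virtio,bridge={bridge}"
    if lan_vlan is not None:
        lan += f",tag={lan_vlan}"
    return [
        shlex.join(["qm", "set", str(vmid), "--net0", wan]),
        shlex.join(["qm", "set", str(vmid), "--net1", lan]),
    ]


for cmd in router_vm_nic_commands(100, "vmbr0", wan_vlan=10, lan_vlan=20):
    print(cmd)
```

Keeping the WAN on its own tagged VLAN means the raw internet connection only ever lands on the router VM's dedicated NIC, not on the hosts themselves.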

Point is, it’s still capable today.

I’m not sure if this is satire, but America isn’t everyone. :)

Steve Gibson’s InControl is probably a no-research-needed option: https://www.grctech.com/incontrol/details.htm

I’ve been listening to the Security Now podcast for about 8 years, so my trust in him and yours might differ. The website looks like Web 1.0 because it is.

I like people doing challenge runs and speedruns, and occasionally LetsGameItOut, who spends 1000 hours committing to a bit I might have considered just a passing funny joke. I’d rather watch that than some ad-riddled evening free-to-air TV.

I’m well over 30, not far from 40. Hobbies can be entertaining as a spectator, like watching someone else race a car. I ain’t gonna be able to do that, but I appreciate those who can.

I watch content about the game while gaming, but I also watch content about my game when I’m not gaming. Therefore, by definition, my time spent gaming will be less than my time spent watching gaming.

Amazing

I thought this was an Onion article.

It’s solving a real problem in a niche case. Someone called it gimmicky, but it’s actually just a good tool currently produced by an unknown quantity. Hopefully it’ll get sorted, or someone else will take up the reins and create an alternative that works perfectly for all my different ISOs.

For the average home punter, maybe even up to a homelab enthusiast, it’s probably not saving much time. For me, it’s on my keyring and I use it to reload Proxmox hosts, Nutanix hosts, and individual Ubuntu VMs running ROS Noetic, not to mention reimaging test devices. Probably a thrice-weekly thing.

So yeah, cumulatively it’s saving me a lot of time, and it trivialises a process.
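
To show how "thrice weekly" adds up, a tiny worked calculation in Python. The 10 minutes saved per reimage is a hypothetical figure for illustration, not something I've measured.

```python
def yearly_time_saved_hours(uses_per_week: int, minutes_saved_per_use: int,
                            weeks: int = 52) -> float:
    """Cumulative time saved over `weeks` weeks, in hours."""
    return uses_per_week * minutes_saved_per_use * weeks / 60


# 3 reimages a week x a hypothetical 10 minutes saved each, over a year.
print(yearly_time_saved_hours(3, 10))  # 26.0
```

Even at that modest guess, it's 1560 minutes, or 26 hours a year.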

If this were a spanner, I’d just go Sidchrome or Kincrome instead of my Stanley. But it’s a bit niche, so I don’t know what else allows such simple multi-ISO booting. Always open to options.

At this point, we want antivirus and anti-cheat out of the Windows kernel. Microsoft killing access to it will genuinely fix Linux compatibility issues.

It couldn’t be more win-win.

Microsoft is trying to test that approach. The company tested restricting kernel access for third-party security vendors in the past, with Windows Vista in 2006, but had to backtrack on the move.

Symantec and McAfee then claimed Microsoft’s decision to shut off access to the kernel amounts to “anti-competitive behavior.”

Without kernel access, this software may struggle to perform in-depth behavioral analyses of processes and applications, to meet its objectives, said Varkey. “Blocking this access can limit the software’s ability to detect and prevent sophisticated attacks.”

They can’t be trusted; kick everyone out of the kernel. Everyone must use an API, and an API can be interpreted.

Yes, but they’re hoping a percentage of people don’t go looking for news that their game has been removed and that they need to take action to get a refund.

They remove it from the store automatically, but don’t refund automatically.

Though I bet there’s an argument that, with Apple as the payment platform, purchases are opaque to Square, it’s still always the customers losing out. Even if it’s just a percentage.