Seems like SecuROM won’t install from playing singleplayer:

https://www.gog.com/forum/fear_series/gogs_blatant_lies_regarding_securom_in_fear/page4

That sounds reasonable and I can understand the decision.

theguardian.com only gave us this for context:

The chorus of Conte’s empowerment anthem contains the phrase “serving kant” – a queer or drag slang phrase roughly meaning “to express boldness”.

With the abundance of languages in the EU, I imagine we could find quite a few words that are phonetically similar to rude words in other languages.

Wouldn’t it be fun to see whether it’s only the English language that is afforded such privileges of denial?

On the other hand, I don’t really care what happens in the Eurovision Song Contest.

The very same.

They’re lobbying the EU for backdoors in end-to-end encryption (E2EE) so they can sell their tech stack to scan all our private communication. https://www.thorn.org/solutions/for-platforms/

Ah yes, the scanner software company Thorn that’s trying to lobby Chat Control into existence in the EU. Sorry, but I don’t trust them or their surveys.

That said, spreading deepfakes of others without their consent is obviously wrong.

Never mind, it’s been abandoned by the company that contributed the most to it.

https://lwn.net/Articles/882460/

I haven’t tried it myself, but there is LibreOffice Online:

https://www.libreoffice.org/download/libreoffice-online/

https://hub.docker.com/r/libreoffice/online/

Their site works fine without JavaScript enabled, and that way it becomes quite a simple thing too!

Obsidian Entertainment has gone from Fallout: New Vegas, where you were free to kill anyone even at the cost of disrupting main quests, to The Outer Worlds, where most of that freedom is still intact, to Avowed, where the freedom to make evil choices is either taken from you (NPCs not reacting to being shot in the face) or has no impact (NPCs ignoring you stealing money and food in the tavern).

I agree with your thought that it’s a directorial choice, not attention to detail, but it’s one that goes in the complete opposite direction of what the studio is known for.

As someone who loves the freedom of games like TES: Morrowind, Fallout: New Vegas and The Outer Worlds, this was a great way to make me lose interest in Avowed. That friendly NPCs don’t react at all when you steal in front of them or shoot them in the face sucks big time.

Does the distro I pick matter?

Packages

When you install a distro, it comes with repositories of apps that you can easily install and keep updated using either the GUI (GNOME Software on GNOME, Discover on KDE) or the package manager in the terminal (dnf in Fedora, apt in Kubuntu and Mint). It’s similar to how you install apps on a smartphone.

The good thing about the apps from the default repository is that they’re (in theory) tested to work well with the distro.

You can also install applications from other sources when necessary.

Update frequency and new tech

Another difference is how new the kernel and software you get from the repos are.

The latest Debian Stable runs kernel 6.1, while Fedora just updated to 6.12 and Arch has been running 6.12 since December.

If you’re running the newest hardware, a newer kernel increases the chance that drivers are available out of the box.

Company-run distros and alternatives:

In my opinion, Ubuntu is the one doing the most forcing as of now, and even they are angels compared to Microsoft.

Fedora had discussions about including opt-out telemetry to help them gather data to improve the distro. They listened to community feedback and backpedaled to opt-in metrics:

https://fedoraproject.org/wiki/Changes/Telemetry

https://fedoraproject.org/wiki/Changes/Metrics

Debian and Arch are both examples of distros without enterprise involvement and that have no upstream distro that can affect their releases.

Map of distros here: https://upload.wikimedia.org/wikipedia/commons/1/1b/Linux_Distribution_Timeline.svg

Stability of the distro:

Of your frontrunners, I’ve only run Fedora, but it has been stable and worked well on my primary PC. So has Debian, which I run on my servers (I have a Debian VM running Portainer for Docker containers, one running Jellyfin and a third running Forgejo).

Monitor support

Multi-monitor support

I don’t have the desk space for dual monitors personally, but I’ve heard that KDE Plasma 6 handles multi-monitor setups well.

HDR

It should have been working since November.

Nvidia is a whole lot simpler to use than people make it sound, though I’ll stay Team Red:

https://rpmfusion.org/Howto/NVIDIA#Current_GeForce.2FQuadro.2FTesla

Fedora guide for Nvidia drivers (with the RPM Fusion repositories enabled, as described in the link above), unless you’re running a really old card:

```shell
sudo dnf update -y  # Update your machine, then reboot
sudo dnf install akmod-nvidia  # Installs the driver
sudo dnf install xorg-x11-drv-nvidia-cuda  # Optional, for CUDA/NVDEC/NVENC support (required for DaVinci Resolve)
```

Does that mean you consider the temporary loss of her voice to be the same harm as if she had lost access permanently?

Do keep in mind that I don’t believe the ban was OK either, but I’d rather have a company where the human factor sometimes fails yet which can properly undo its mistakes and apologize than something like Meta, where you cannot even get in touch with a human if something gets flagged.

A company that never makes a mistake would of course be best, but that’s never going to happen.

I hope the self-hosted solution that @singletona@lemmy.world mentioned becomes reality, both for people like Joyce and because it would be a step towards self-hosted voice assistants for those of us who refuse to use cloud-based ones.

When I first asked Sophia Noel, a company representative, about the incident, she directed me to the company’s prohibited use policy.

There are rules against threatening child safety, engaging in illegal behavior, providing medical advice, impersonating others, interfering with elections, and more.

But there’s nothing specifically about inappropriate language. I asked Noel about this, and she said that Joyce’s remark was most likely interpreted as a threat.

[…]

Joyce doesn’t hold a grudge—and her experience is far from universal.

Jules uses the same technology, but he hasn’t received any warnings about his language—even though a comedy routine he performs using his voice clone contains plenty of curse words, says his wife, Maria.

He opened a recent set by yelling “Fuck you guys!” at the audience—his way of ensuring they don’t give him any pity laughs, he joked.

That comedy set is even promoted on the ElevenLabs website. Blank says language like that used by Joyce is no longer restricted.

“There is no specific swear ban that I know of,” says Noel.

That’s just as well.

And then she got an apology, and ElevenLabs reinstated her account.

Regarding Houdini FX, it seems they have Linux installs and a free (watermarked) version for hobbyists: https://www.sidefx.com/products/houdini-apprentice/

Other than that, I’d say Blender is the go-to app; it shows up as one of the most popular apps in the Discover app.

My recommendation would be to use Clonezilla or a similar tool to make an image of your Windows install and save it on the external SSD.

Then I would install Fedora KDE, or whatever your poison is, on the internal drive.

If you want to switch back to Windows, you can always use Clonezilla, or your tool of choice, to restore the image.

You could also use KVM/QEMU in your Linux distro to restore the image into a Windows VM.

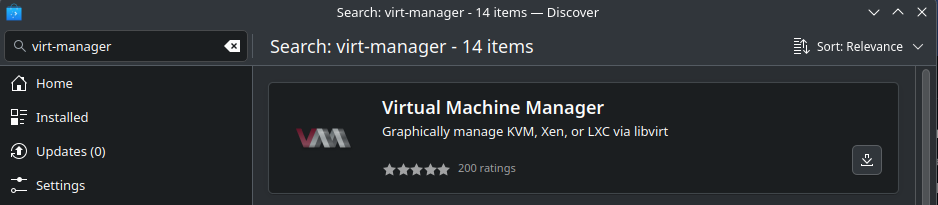

Virt-Manager gives you a desktop GUI, while Cockpit + cockpit-machines gives you a nice web UI for managing virtual machines in Linux.

Clonezilla guide, for both Linux and Windows:

https://www.linuxbabe.com/backup/how-to-use-clonezilla-live

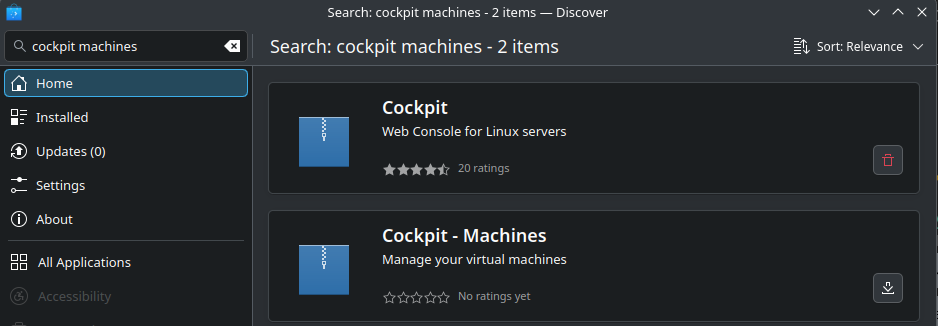

Both Cockpit and Virt-Manager are available in Fedora KDE’s Discover app if you prefer GUI installs:

Cockpit

Virt-Manager

I’m a big fan of using https:// in Mull on my Android, and in Firefox on my PC.

I’ve never been a fan of installing more apps than necessary.

Linux Routing Fundamentals

Linux has been a first-class networking citizen for quite a long time now. Every system running a Linux kernel has at least three routing tables out of the box and supports multiple mechanisms for advanced routing, from policy-based routing (PBR) to VRFs(-lite) and network namespaces (NetNS). Each of these provides different levels of separation and features, with PBR being the oldest and VRFs the most recent addition (starting with kernel 4.3).
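To make the "at least three routing tables" concrete: iproute2 maps table IDs to names in an `rt_tables` file, whose stock reserved entries are 255 `local`, 254 `main` and 253 `default`, plus 0 `unspec`, which is a placeholder rather than a real table. The Python sketch below parses those entries. The file contents are hard-coded here as an assumption (the real file’s path varies between distros and iproute2 versions, commonly `/etc/iproute2/rt_tables`), and `parse_rt_tables` is a hypothetical helper, not part of any library.

```python
# Stock reserved entries of iproute2's rt_tables file (hard-coded here;
# the real file's location varies, e.g. /etc/iproute2/rt_tables).
DEFAULT_RT_TABLES = """\
#
# reserved values
#
255\tlocal
254\tmain
253\tdefault
0\tunspec
"""


def parse_rt_tables(text: str) -> dict:
    """Map routing table IDs to names, ignoring comments and blank lines."""
    tables = {}
    for raw_line in text.splitlines():
        line = raw_line.split("#", 1)[0].strip()  # drop comments
        if not line:
            continue
        table_id, name = line.split()
        tables[int(table_id)] = name
    return tables


tables = parse_rt_tables(DEFAULT_RT_TABLES)
# ID 0 ("unspec") is a placeholder, not an actual routing table.
routing_tables = {tid: name for tid, name in tables.items() if tid != 0}
print(routing_tables)  # {255: 'local', 254: 'main', 253: 'default'}
```

On a live system, `ip route show table local` (or `table main`, `table default`) lists the routes held in each of these tables.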

This article is the first part of the Linux Routing series and will provide an overview of the basics and plumbing of Linux routing tables, what happens when an IP packet is sent from or through a Linux box, and how to figure out why. It’s the baseline for future articles on PBR, VRFs, and NetNSes, their differences, and their applications.
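A rough way to picture what happens when a packet is routed: the kernel walks its policy rules in priority order (by default `0: lookup local`, `32766: lookup main` and `32767: lookup default`, as listed by `ip rule show`) and, in the first table that has a matching route, picks the longest matching prefix. The Python sketch below models only that much. The routes, interface names (`eth0`, `wg0`) and the `lookup` helper are hypothetical, and the real kernel also considers rule selectors (source, fwmark, input interface), route types and metrics. On a real box, `ip route get <address>` shows the kernel’s actual decision.

```python
import ipaddress
from dataclasses import dataclass
from typing import Optional, Tuple


@dataclass
class Route:
    prefix: ipaddress.IPv4Network
    dev: str
    via: Optional[str] = None  # next-hop gateway; None means directly connected
    kind: str = "unicast"      # "local" means "deliver to this host"


# Default policy rules on a stock Linux system: (priority, table).
RULES = [(0, "local"), (32766, "main"), (32767, "default")]

# Hypothetical routing tables for a host with address 192.168.1.10.
TABLES = {
    "local": [Route(ipaddress.ip_network("192.168.1.10/32"), "eth0", kind="local")],
    "main": [
        Route(ipaddress.ip_network("0.0.0.0/0"), "eth0", via="192.168.1.1"),
        Route(ipaddress.ip_network("192.168.1.0/24"), "eth0"),
        Route(ipaddress.ip_network("10.0.0.0/8"), "wg0"),
    ],
    "default": [],  # empty on a stock system
}


def lookup(dst: str) -> Optional[Tuple[str, Route]]:
    """Return (table name, route) for dst, or None if nothing matches."""
    addr = ipaddress.ip_address(dst)
    for _priority, table in sorted(RULES):  # lowest priority value first
        matches = [r for r in TABLES[table] if addr in r.prefix]
        if matches:
            # Longest prefix match: the most specific route wins.
            best = max(matches, key=lambda r: r.prefix.prefixlen)
            return table, best
    return None  # no route to host


for dst in ["192.168.1.10", "192.168.1.50", "10.1.2.3", "8.8.8.8"]:
    table, route = lookup(dst)
    gateway = f" via {route.via}" if route.via else ""
    print(f"{dst}: table {table}, {route.kind} {route.prefix}{gateway} dev {route.dev}")
```

The rule ordering is why traffic to the host’s own address never leaves the box: the `local` table is consulted before `main`, even though `main`’s default route would also match.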

Civ4 is the one I still play. I like my stacks of doom and could never get into the hexagons and the no-stacking rule of the later games.

The shooter had a hunting license and used his own hunting weapon for the attack. He had no known affiliations with criminals and no criminal record of his own. According to his tax statements, he had no income during 2023.

Source: https://www.svt.se/nyheter/inrikes/det-har-vet-vi-om-misstankte-garningsmannen-i-orebro

And in more languages than just Swedish too.

English version of the brochure.

More in English here: https://www.msb.se/en/advice-for-individuals/